Google Search Console is a platform that allows you to gain key organic search insights as well as a greater understanding of Google’s indexing process.

It was once known as “Google Webmaster Tools” and was rebranded in 2015 to reflect a far broader range of users. Today, Search Console is used by SEOs, designers, developers, business owners and digital marketers.

On the surface, Search Console allows us to optimise the content of our website to increase traffic, understand the device and location of our users, and fix navigation errors and crawling bugs.

Verify Your Account

The first step to using Google Search Console is to verify your account. In other words, we need to prove to Google that we own or administer the website before it will show us sensitive information about it.

There are several ways that a website can be verified. For instance, Google may ask you to upload an HTML file to your website, add a meta tag to your home page, or verify via a DNS record, Google Analytics or Google Tag Manager. Google provides excellent documentation for all the various ways to verify your website.
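If you choose the meta tag method, it can help to confirm the tag is actually present in the page before asking Google to verify. The sketch below uses only Python's standard library and assumes the tag is named `google-site-verification`; the sample page and its token are placeholders, and `find_verification_tokens` is a hypothetical helper, not part of any Google tooling.

```python
from html.parser import HTMLParser


class VerificationTagFinder(HTMLParser):
    """Collects the content of every google-site-verification meta tag."""

    def __init__(self):
        super().__init__()
        self.tokens = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attributes = dict(attrs)
        if (attributes.get("name") or "").lower() == "google-site-verification":
            self.tokens.append(attributes.get("content") or "")


def find_verification_tokens(html):
    """Return the verification tokens found in an HTML document."""
    finder = VerificationTagFinder()
    finder.feed(html)
    return finder.tokens


# Hypothetical home page; the token is a placeholder, not a real one.
SAMPLE_PAGE = """
<html><head>
<title>Example</title>
<meta name="google-site-verification" content="PLACEHOLDER_TOKEN">
</head><body>Hello</body></html>
"""

print(find_verification_tokens(SAMPLE_PAGE))  # ['PLACEHOLDER_TOKEN']
```

An empty list would suggest the tag is missing or was stripped by a template or plugin.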

Performance – Get Insights into Organic Search Performance

Organic Search Performance | Google Search Console

The central feature of Search Console is the search performance dashboard, which provides you with top-level metrics such as clicks, impressions, click-through rate and average position. We are also provided with a graph which can be used to highlight spikes in organic search performance over a given timeframe. This is where the majority of your time will be spent.
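Click-through rate is simply clicks divided by impressions, expressed as a percentage. As a small illustration, the sketch below recomputes it from made-up figures; the rows and the `click_through_rate` helper are assumptions for the example, not an export format that Search Console guarantees.

```python
def click_through_rate(clicks, impressions):
    """Return CTR as a percentage; 0.0 when there are no impressions."""
    if impressions == 0:
        return 0.0
    return 100.0 * clicks / impressions


# Made-up figures for two queries.
rows = [
    {"query": "blue widgets", "clicks": 30, "impressions": 600},
    {"query": "widget repair", "clicks": 5, "impressions": 400},
]

for row in rows:
    ctr = click_through_rate(row["clicks"], row["impressions"])
    print(f"{row['query']}: {ctr:.2f}%")

total_clicks = sum(row["clicks"] for row in rows)
total_impressions = sum(row["impressions"] for row in rows)
print(f"overall: {click_through_rate(total_clicks, total_impressions):.2f}%")
```

Note that the overall figure is calculated from the totals rather than by averaging the per-query percentages, which would overweight low-volume queries.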

Another key aspect of the dashboard is the Queries breakdown, which lists the keywords that people have searched for on Google to find your website. This data allows us to spot potential keyword opportunities and amend the content of our website to attract more traffic from a specific keyword. On the other hand, if we compare the data month on month, we can spot any keywords that are not performing well and cut our losses early on.
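The month-on-month comparison can also be done outside the dashboard. Here is a minimal sketch, assuming you have copied the clicks per query for two months into plain dictionaries; the figures and the `compare_months` helper are invented for illustration.

```python
def compare_months(previous, current):
    """Return (query, change_in_clicks) pairs, biggest drop first."""
    queries = set(previous) | set(current)
    changes = [(q, current.get(q, 0) - previous.get(q, 0)) for q in queries]
    # Sort by change, then by query name so the order is deterministic.
    return sorted(changes, key=lambda item: (item[1], item[0]))


# Made-up clicks per query.
last_month = {"blue widgets": 120, "widget repair": 40, "cheap widgets": 15}
this_month = {"blue widgets": 90, "widget repair": 55, "widget sizes": 10}

for query, change in compare_months(last_month, this_month):
    print(f"{query}: {change:+d}")
```

Queries at the top of the output are losing clicks and may need attention; queries missing from one month are treated as zero clicks for that month.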

Keyword Performance Breakdown | Google Search Console

Finally, we are given additional user metrics such as the country of origin for each user, so we can see how well the website performs internationally. Also, we are provided with the device type, so we can see how the website performs on different devices such as mobile, tablet and desktop. Having the ability to view your queries on smartphones allows you to improve your mobile targeting more specifically. We can also view the traffic by search type e.g. web or image, so we can see the traffic for pages and image content.

If you would like some inspiration for new keyword ideas, then I would recommend the Google Keyword Planner tool.

Index – Upload a Sitemap

A useful feature of Google Search Console is the ability to submit an XML sitemap to Google. An XML sitemap is a file that contains a list of all the main URLs of a website. Think of it as a roadmap that points Google in the direction of the most important pages of your website. Submitting the list of URLs helps Google discover these pages so that they can be indexed and appear in its search results.

Submitting a sitemap can be advantageous when the content of a website is always changing and new pages need to get indexed. In addition, some pages may be isolated in terms of internal linking, which makes them hard for Google to discover; adding these URLs to the sitemap alleviates the issue. Finally, Google only crawls a limited number of URLs on each visit to a website, so the sitemap allows you to point it at the most important ones.

A sitemap is conventionally stored at the root of the website, for example https://your-website.com/sitemap.xml, and then submitted through the Sitemaps report in Search Console. Google has detailed help on how to create and upload your sitemap.
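To give an idea of what the file contains, here is a minimal sketch that builds a sitemap with Python's standard library. It uses the sitemaps.org namespace and only the `loc` element; the URLs and the `build_sitemap` helper are placeholders, and most content management systems or plugins will generate this file for you.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls):
    """Return a sitemap document, as a string, listing the given URLs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Placeholder URLs for the most important pages.
sitemap = build_sitemap([
    "https://your-website.com/",
    "https://your-website.com/services/",
])
print(sitemap)
```

The resulting text would be saved as sitemap.xml on the server before being submitted.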

Index – Validate Your Robots.txt

Robots.txt Testing Tool | Google Search Console

The robots.txt file on your website tells search engine crawlers which pages they may crawl and, equally, which pages they should not crawl.

The robots.txt syntax starts with the user agent, which is the crawler visiting your website, e.g. Googlebot. A star (*) means all user agents. The Disallow directive stops crawlers from fetching a URL directory, e.g. Disallow: /wp-admin/ would block the admin area of WordPress. Note that this controls crawling rather than indexing: a blocked URL can still appear in the search listings if other pages link to it, so use a noindex tag when a page must stay out of the listings. It is also possible to block a folder and then allow a folder within it, e.g. you may wish to block the assets folder of your website but keep its images crawlable. Google applies the most specific matching rule, which is the one with the longest path, so Allow: /assets/images/ takes precedence over Disallow: /assets/ regardless of the order in which the two are declared.
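The assets example can be tried locally with Python's standard `urllib.robotparser`. This is a rough sketch rather than a replica of Googlebot: the standard-library parser applies rules in file order, whereas Google documents that it picks the most specific rule, so the Allow line is listed first here to get the same answer under both. The rules, the domain and the `can_crawl` helper are placeholders.

```python
from urllib.robotparser import RobotFileParser

# Placeholder rules: block /assets/ and /wp-admin/, but keep images crawlable.
ROBOTS_TXT = """\
User-agent: *
Allow: /assets/images/
Disallow: /assets/
Disallow: /wp-admin/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())


def can_crawl(path, user_agent="Googlebot"):
    """Return True if the parsed rules let user_agent fetch the path."""
    return parser.can_fetch(user_agent, "https://your-website.com" + path)


for path in ["/assets/images/logo.png", "/assets/site.css", "/wp-admin/", "/about/"]:
    print(path, can_crawl(path))
```

The image and the About page come back as crawlable, while the stylesheet and the admin area are blocked.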

If we have two rules that can conflict with each other, it is desirable to test whether or not a specific URL can be crawled by Google. Google Search Console has a robots.txt testing tool that can alleviate this problem. In the tool, we can enter a specific URL and a user agent and test whether it can be crawled or not. The robots.txt testing tool will also allow you to test that the file has been uploaded to the correct place on your server. For your robots.txt to work, you need to ensure the file is uploaded to the root of your website: https://your-website.com/robots.txt.

Additional functionality of the robots.txt is the Sitemap directive, which declares where the XML sitemap is located; this can be useful if you do not store the file in the recommended location. The Crawl-delay directive asks a crawler to wait a set number of seconds between requests. This is desirable because the Yahoo and Bing crawlers can send many requests to the server at once and slow the performance of your website if the pace of crawling is not moderated. Note that Googlebot ignores the Crawl-delay directive.
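Both directives can be read back with Python's standard `urllib.robotparser`, which is a quick way to check that the file says what you intended. In this sketch the rules, the sitemap location and the ten-second delay are all made up, and `site_maps()` needs Python 3.8 or later.

```python
from urllib.robotparser import RobotFileParser

# Placeholder file: a delay for Bing's crawler and a non-default sitemap path.
ROBOTS_TXT = """\
User-agent: bingbot
Crawl-delay: 10
Disallow: /wp-admin/

User-agent: *
Disallow: /wp-admin/

Sitemap: https://your-website.com/maps/sitemap.xml
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

print(parser.site_maps())              # ['https://your-website.com/maps/sitemap.xml']
print(parser.crawl_delay("bingbot"))   # 10
print(parser.crawl_delay("Googlebot")) # None, no delay declared for this agent
```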

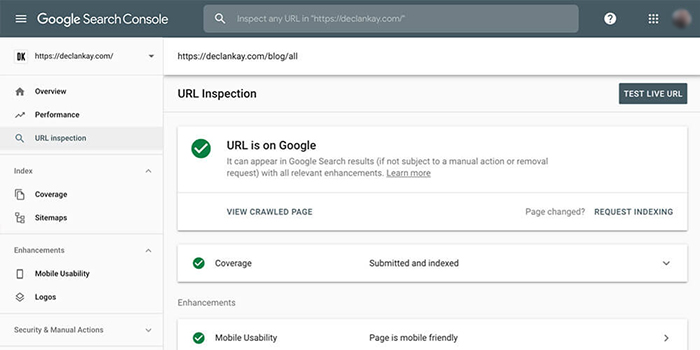

Index – URL Inspection and Request Indexing

URL Inspector Page is not on Google | Google Search Console

As we discussed previously, Google uses robots to crawl your website, adds each of the publicly available pages to its index and then shows these pages in the search results. Having as many high-quality pages as possible in Google’s index is a critical part of your organic SEO strategy.

A page of your website can be missing from Google’s index for various reasons: it is blocked by robots.txt, it has a noindex tag, or it is a new page that Google has yet to discover. In each of these cases, it is desirable to know if Google can index a specific page, which is where the URL Inspection tool comes in.

Simply enter the URL of the page that you wish to test into the Inspect bar at the top. With this tool, we can see what information Google currently knows about the page. It can also tell us why Google could, or could not, index the page, which can be handy if there are any indexing problems. Additionally, Google will attempt to render your page in a preview image. Should the page fail to render, this can point to issues with the JavaScript code or suggest that the page cannot be crawled properly.
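A stray noindex tag is one of the easier causes to check for yourself before reaching for the tool. The sketch below scans a page's HTML for a robots meta tag using Python's standard library; the sample markup and the `has_noindex` helper are hypothetical, and it does not cover the equivalent X-Robots-Tag HTTP header.

```python
from html.parser import HTMLParser


class RobotsMetaFinder(HTMLParser):
    """Collects directives from <meta name="robots"> and <meta name="googlebot">."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attributes = dict(attrs)
        if (attributes.get("name") or "").lower() in ("robots", "googlebot"):
            for part in (attributes.get("content") or "").split(","):
                directive = part.strip().lower()
                if directive:
                    self.directives.add(directive)


def has_noindex(html):
    """Return True if the page asks search engines not to index it."""
    finder = RobotsMetaFinder()
    finder.feed(html)
    return "noindex" in finder.directives


# Hypothetical pages.
blocked = '<html><head><meta name="robots" content="noindex, follow"></head></html>'
open_page = '<html><head><meta name="robots" content="index, follow"></head></html>'

print(has_noindex(blocked))    # True
print(has_noindex(open_page))  # False
```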

URL Inspector Page is on Google | Google Search Console

The XML sitemap and robots.txt are great ways to guide Google’s crawling of your website. That said, Google Search Console also allows us to manually submit a page of our website to Google, which is called “Request Indexing”. This can be a useful tool if you have recently added a new page to your website and you wish to speed up the indexing process. This tool will also work for existing URLs, should the content of a page change.

Enhancements – Mobile Usability

Mobile-Friendly Pages Report | Google Search Console

Google made mobile-friendliness a ranking signal for mobile searches in 2015, and in 2018 it began rolling out mobile-first indexing, so mobile-friendly websites have an advantage in the search result rankings.

The mobile usability report flags problems on your website for users on mobile devices. The summary page shows a chart that classifies each of the pages of your website as either “valid”, meaning it is mobile friendly, or “error”, meaning a problem has been encountered. This view can be useful when analysing mobile usability over a period of time.

Common mobile usability checks include ensuring that text is large enough to be read and that content is not wider than the screen. More elaborate checks include confirming that the viewport is set to “device-width”, which allows browsers to adapt the website to the width of the device. Please note that this is not an exhaustive list. For a comprehensive check on your mobile performance, I’d recommend the Mobile-Friendly Test Tool.
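The viewport check is easy to reproduce on your own pages. As a rough sketch using Python's standard library, the code below looks for a viewport meta tag and reports whether its width is set to device-width; the sample markup and the helper names are assumptions, and the other usability checks in the report are not covered.

```python
from html.parser import HTMLParser


def parse_viewport(content):
    """Turn 'width=device-width, initial-scale=1' into a dictionary."""
    settings = {}
    for part in content.split(","):
        key, separator, value = part.partition("=")
        if separator:
            settings[key.strip().lower()] = value.strip().lower()
    return settings


class ViewportFinder(HTMLParser):
    """Remembers the settings of the last viewport meta tag seen."""

    def __init__(self):
        super().__init__()
        self.settings = None

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "meta" and (attributes.get("name") or "").lower() == "viewport":
            self.settings = parse_viewport(attributes.get("content") or "")


def uses_device_width(html):
    """Return True if the page sets the viewport width to device-width."""
    finder = ViewportFinder()
    finder.feed(html)
    return bool(finder.settings) and finder.settings.get("width") == "device-width"


# Hypothetical pages.
responsive = '<head><meta name="viewport" content="width=device-width, initial-scale=1"></head>'
fixed = '<head><meta name="viewport" content="width=1024"></head>'

print(uses_device_width(responsive))  # True
print(uses_device_width(fixed))       # False
```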

Security & Manual Actions

A manual action is issued when Google has found that your website does not comply with its Webmaster Guidelines. Manual actions need to be fixed, as failure to do so will cause a page to be demoted in the rankings or removed altogether.

Manual actions are flagged by Google when it thinks your website is spam or is using black hat methods to artificially improve your rankings. Common problems that can get flagged are excessive use of spammy content, such as links to discount pages on online shops. Pages with thin content or no content at all can be highlighted as illegitimate content. Finally, other issues that can get uncovered are keyword stuffing, image cloaking and structured data issues.

The Security section is where Google will highlight any security issues that it has found on your website. The report will provide a list of any security issues that you have, with a link to the specific page where the issue was discovered.

For each error, we are given a report, which describes the issue in detail and provides a solution as to how we should fix the error. Failure to fix the error will see your website demoted in the search rankings or removed altogether if Google thinks you are putting users at risk.

Some of the most common issues that are uncovered in this section are phishing, SQL injection, cross-site malware and code injection. Once the issue is fixed, Google offers a reconsideration service, which allows us to submit a page for indexing if it was removed before.

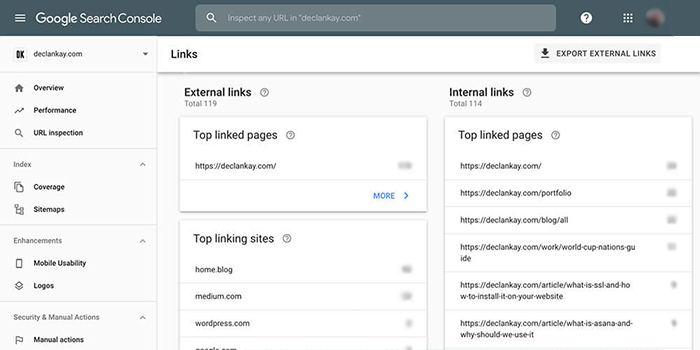

Links – Assess Internal and External Linking

Internal and External Linking | Google Search Console

The Links section is divided up into two sections: Internal Links and External Links. The Internal Links section displays a report that lists the most linked-to pages within your website. Please note that these internal links have both a source and a destination inside your website. The URL that appears in the list is the destination URL, i.e. the page that the link takes the user to.

Internal linking is useful because it makes it easier for users to navigate around your website and it also passes link equity around your website. The Internal Links report highlights which pages are linked to the most and allows you to identify pages that you think should have more links. As a rule of thumb, keep the number of internal links on each page reasonable; a commonly cited guideline is no more than 100.

The External Links report allows us to see the pages on our website that external websites link to. The report covers the pages on your website that are getting linked to, the most common websites that link to your website, and finally the text used in the links that take users to your website. The breakdown of the websites that link to your website can be useful as it allows us to see where a portion of your traffic is coming from. This can highlight new opportunities for driving traffic towards your website by creating more important backlinks. Please note that the report is similar to the Referral channel on Google Analytics.
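To get a feel for how links on a single page split into internal and external, here is a minimal sketch using Python's standard library. It treats a link as internal when its host matches the host of the page it appears on; the sample markup, the domains and the `split_links` helper are made up, and this is only an approximation of what the Links report counts.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse


class LinkCollector(HTMLParser):
    """Collects the href of every anchor tag."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.hrefs.append(href)


def split_links(html, page_url):
    """Return (internal, external) lists of absolute link URLs."""
    collector = LinkCollector()
    collector.feed(html)
    site_host = urlparse(page_url).netloc
    internal, external = [], []
    for href in collector.hrefs:
        absolute = urljoin(page_url, href)
        parsed = urlparse(absolute)
        if parsed.scheme not in ("http", "https"):
            continue  # skip mailto:, tel: and similar links
        if parsed.netloc == site_host:
            internal.append(absolute)
        else:
            external.append(absolute)
    return internal, external


# Hypothetical page content.
SAMPLE = """
<a href="/about/">About</a>
<a href="post-1/">First post</a>
<a href="https://example.org/">Partner</a>
<a href="mailto:hello@your-website.com">Email</a>
"""

internal, external = split_links(SAMPLE, "https://your-website.com/blog/")
print(len(internal), "internal,", len(external), "external")  # 2 internal, 1 external
```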

Conclusion

Overall, Google Search Console is the number one tool for monitoring and growing your organic presence online. It offers in-depth reporting that provides insights into your best-performing keywords and suggests where you could improve going forward.

Aside from keywords, Search Console gives unrivalled access to Google’s indexing process, which enables you to maximise your coverage online. Coupled with the usability and security features, Google Search Console helps your website to be performant and safe for all users. Sign up for free today if you are ready to take your SEO to the next level.